On Scaling Software Companies

Lessons learned from observation and history

I'm a Chilean Software Engineer living in the United Kingdom, specifically in the cold and beautiful north coast of Northern Ireland. I moved here in March 2020 -- yes, at the very start of a global pandemic! -- with my wife Briony and our cat Pua to be closer to her side of the family.

Originally a History B.A., I made the career switch around 2015 once I discovered how fun and intellectually stimulating Engineering is. Apart from building useful stuff other people can use, I love sharing what I've learned and hearing what others have learned too.

There are many definitions of scaling out there, but I like this very simple one:

Scaling a business means setting the stage to enable and support growth.

The goal is growth (who doesn't want their business to grow?). However, the key idea here is that growth is something that needs enablement and support. The process of creating those supporting and enabling structures is called scaling.

In most traditional businesses, scaling is a linear equation: to get more output (growth) we need more input (people, raw materials, machines, refined processes, etc.). In other words, growth is achieved through having more people and assets for production, and this relationship is directly proportional: more growth means you need more people and assets.

This is obvious: for a business like McDonald's to grow, it needs more restaurants in crowded areas, with proper machinery and proper staff. That costs money, but makes more money than it costs: revenue is what matters.

The problem is that software companies are not your traditional company. They are a weird mix of a service company and a product company. Software is, in some extraordinary ways, both tangible and intangible. The source code is tangible and is produced once, but the result of it (the built software) is more like a service, since it can be replicated and sold multiple times, in a SaaS manner, for instance.

For these kinds of companies, scaling in linear terms is usually the reason for their ruin. If you are a CEO, a CFO, or a CTO of a software company and think that to double your revenue you need to double your engineering team size, you are making the biggest mistake of your life, and it will cost you that company. This is because the engineering department and the costs associated with it are probably the biggest drain on your revenue. Engineers are expensive (especially if you want good ones), and computing is expensive (especially if you have poorly designed software). You can't scale engineering linearly.

The best way of scaling a software company is exponentially: with an extra one or two engineers you can deliver 2, 5, or 10 times as much. This is not because you are suddenly hiring 10x developers, but because you have mastered the art of making what seemed complicated simple, by means of continuous improvement.

A story of two platforms

I used to work for a company that was in the business of making integrations between different lenders and merchants. Version one of the platform was a monolithic application that had grown super complex over time due to poor coding standards, lack of testing, coupling, and other things. Now, the core idea of that platform was really good. It served as a hub where all the providers were implemented, and setting up a connection between them usually involved copying a few classes and implementing a few interfaces, plus configuring some parameters like API keys. The tricky bit came when something custom needed to be made for a particular integration and it wasn't supported by the abstractions that powered the model. But I would say that to overcome those difficulties it just needed a big refactor, or probably a rewrite that took into account the new domain knowledge gained while following the same underlying idea. The platform took you 90% of the way there; you just needed that extra 10%.

Version two of the product was a suite of REST-powered microservices coordinated by a workflow engine. This was built by a team that was brought in after a big round of investment. Now, every integration had a unique workflow, with unique steps and a unique UI. Technically, it solved the missing 10% problem of customization, but at a big price. Because everything was its own service, we spent ages writing glue code and API clients, and investing in expensive and complex acceptance test suites to make sure we had wired all the parts of an integration correctly, and that errors were handled properly and propagated accordingly. If that is hard enough to do in a monolith, imagine doing it in a distributed one. Distributing our services might have solved some issues, but it made delivery 10 times slower. Integrating something no longer meant copying some classes, but defining a workflow (which was complex enough!) and its suite of services, deploying it, writing acceptance tests, and setting up observability, all of this across 4 environments with multiple codebases.

Looking back, we should have gone the route that allowed us to do more with less effort. Sometimes that route covers 90% of the use case, and clever engineering is needed for the remaining 10%. But I felt we gave up the 90% for a tenner.

The commercial results spoke for themselves. Even with its defects, the first platform still yielded most of the company's revenue. The new platform had only one successful project, which took almost two years, didn't provide any substantial increase in revenue, and could have been done in the old platform anyway. It also failed to deliver a big project due to overcomplexity caused by overcustomization. All this with an engineering department twice as big. Doubling the head count didn't yield the expected revenue growth, because someone got the vision and the strategy wrong.

The most important metric

This is why, as a CTO, your most important metric is delivery speed. How fast you can go from idea to production is the most important thing.

Now, some CTOs know this, but they misread "most important" as "only important". What I mean is that they focus only on delivery speed, but they never achieve it, because delivery speed is affected by a myriad of things that they are not worrying about.

Poor Developer Experience is probably the biggest factor. If you have a system that is hard to understand, hard to test, and hard to change, where to do something simple you need to go and figure out where Lt. Bello was lost, then your delivery will be slow. Or if developers spend a lot of time writing boilerplate code that could be automated, that's another time waste. In short, the easier it is for developers to do their job, the better. Investing in tools to aid with setting up environments, automating trivial things, and generating boilerplate is the best investment of time you can make apart from building actual features.

If your codebase is frustrating to work with, you'll have high rates of engineer turnover, which in turn will affect your delivery speed. Reduce incidental complexity. Strive to make things as simple as they can be, and don't let yourself be fooled by shiny, trendy stuff. I've seen the SPA + Microservices revolution and all the complexity it brought, and now we are heading back the other way. Just make sure that what you are doing works for you and your product and that you are always solving the right problems.

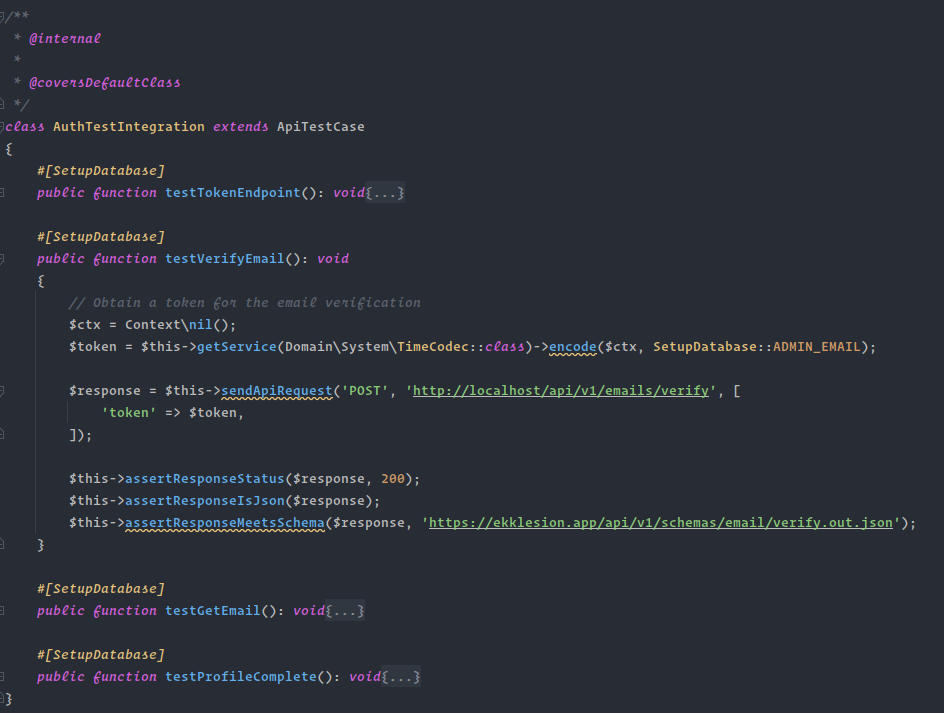

Testing is another area. You need to make sure your test suite runs as fast and as reliably as possible. Slow and flaky tests are extremely bad for your delivery speed. And when something breaks, the why should be extremely obvious, not some weird stack trace full of noise that no one can make sense of. You don't need a massive QA team if you develop a product that is easy to test. Extend your testing frameworks with custom helpers so you can write more tests with less code. For instance, a test in one of my projects uses a custom PHPUnit extension that resets the database when the SetupDatabase attribute is present. It also uses custom assertions for testing APIs.
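To make the idea concrete, here is a minimal sketch of the same pattern in Python's built-in unittest. It is an analogue, not the PHPUnit extension mentioned above: the `setup_database` decorator stands in for the SetupDatabase attribute, and `ApiTestCase`, `assert_json_response`, `list_users`, and the `users` table are all hypothetical names made up for the illustration.

```python
import json
import sqlite3
import unittest

# Hypothetical in-memory database shared by the tests in this sketch.
_connection = sqlite3.connect(":memory:")


def reset_database(conn):
    """Drop and recreate the schema so a marked test starts from a clean state."""
    conn.executescript(
        """
        DROP TABLE IF EXISTS users;
        CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        """
    )


def setup_database(test_func):
    """Marker decorator (assumed name) playing the role of a SetupDatabase attribute."""
    test_func.needs_clean_database = True
    return test_func


class ApiTestCase(unittest.TestCase):
    """Base class bundling the automatic database reset and a custom API assertion."""

    def setUp(self):
        self.db = _connection
        # Look up the test method about to run and reset only if it is marked.
        test_method = getattr(self, self._testMethodName)
        if getattr(test_method, "needs_clean_database", False):
            reset_database(self.db)

    def assert_json_response(self, response, status, expected_body):
        """Check the status code and the decoded JSON body in a single call."""
        actual_status, raw_body = response
        self.assertEqual(status, actual_status)
        self.assertEqual(expected_body, json.loads(raw_body))


def list_users(conn):
    """Stand-in for an API endpoint: returns a (status, JSON body) pair."""
    rows = conn.execute("SELECT id, name FROM users ORDER BY id").fetchall()
    return 200, json.dumps([{"id": row[0], "name": row[1]} for row in rows])


class ListUsersTest(ApiTestCase):
    @setup_database
    def test_lists_inserted_users(self):
        self.db.execute("INSERT INTO users (name) VALUES ('Ada')")
        self.assert_json_response(
            list_users(self.db), 200, [{"id": 1, "name": "Ada"}]
        )

    @setup_database
    def test_starts_empty(self):
        # Passes only because the reset wiped the row inserted by the other test.
        self.assert_json_response(list_users(self.db), 200, [])
```

Saved as a module, this runs with `python -m unittest`. The point is that each test body stays two or three lines long: the reset and the response checks live in one shared place, so adding another test costs almost nothing.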

Sometimes, complexity makes its way in and there is no way back. But then investment in onboarding and training should follow suit. Make sure you take proper time to explain to new joiners how your system works and why things work the way they do. Who knows, maybe they will have an idea or two to improve things. And give them the time to do so!

Code integration, review, and deployment should be as automated as possible through a CI/CD pipeline. Make sure it runs fast and makes extensive use of caching. If it needs to be super custom and vendor-dependent, so be it. It is better that it runs fast on one vendor than slow on any vendor.

But above everything else, be a problem seeker and solver. Always ask yourself the question: "What is in the way of my team delivering faster, with less effort?" That should be the question in every refinement too: "How can we do more with less?" That's the key.

A theatrical analogy

There is probably nothing more irritating to CEOs than spending time on things that do not deliver product features. The words "migration" or "refactoring" terrify them to no end. And to some degree, I understand. After all, you are not delivering any features while your competitors are. But sometimes, to keep on moving, you have to take a few moments to catch your breath.

Let's go back to the definition of scaling given at the beginning.

Scaling a business means setting the stage to enable and support growth.

I have the privilege of going to London often for work and every time I go I try to squeeze in a visit to the theatre to watch a musical. I've watched Hamilton, Come From Away, and a few others (thank you lottery tickets!).

Can you imagine a musical that does not have a stage? Would it be the same? Would it have the same impact? Would it convey the same meaning and emotions? I don't think so. Every musical needs a stage. Setting the stage is an integral part of the musical's success, although it does not happen during the show itself. While they are setting the stage, they are not getting paid. It is when people go to see the result of that effort that the revenue comes.

In the same way, investing in refining your delivery process as much as possible is integral to the success of your software company and product. It will take time away from product work, but it will pay off. A good, smooth delivery process will always give you the upper hand against any competition you might have.

If you can do more than your competition with less (lower costs, less time, less effort) then you probably have 50% of the battle won. The other half is just good ideas (Product), customer relations (Sales), putting yourself out there (Marketing), and good talent to bring into those (HR). But if your engineering cannot do more than your competition with less, then it does not matter how much you pour into the other areas: your company will stagnate sooner or later because you won't be able to deliver.

The Industrial Revolution

Scaling is about solving problems that enable growth, not just growing. If you solve the problems, the growth will come. Let me illustrate this with another example.

When I was at University, one of my favorite topics was the Industrial Revolution and its causes. I was so interested in it that I wrote a few articles about it for the Uni's history magazine, most of them expounding the thesis of an author whose name and book I've long forgotten - I tried to google a bit to see if I could find the book, but I couldn't.

If memory serves me, in this book the author noticed that all the raw materials and knowledge needed for the revolution were in Great Britain's possession at least 400 years before the Industrial Revolution took place. They already produced iron and had people who knew how to work it, they had coal and could burn it in a controlled fashion, and they most certainly had some experience with pumping systems and mechanical concepts like cranks, con rods, rotors, and other things (although all these systems were human or animal powered).

The author's thesis is that the revolution didn't start earlier not for a lack of materials, knowledge, or skill, but rather because demand wasn't high enough. Once demand outpaced supply, highly technical people started to work out how to produce at a higher rate without employing twice as many people, so they could meet the demand. They were trying to figure out how to deliver more, with less. That's why, once the main idea was developed, they improved upon the steam engine concept and applied it to every possible thing under the sun: mills, water pumps, transport, and textiles.

This makes the Industrial Revolution a technical victory. It wasn't triggered by scientists, but by skilled people who were tradesmen by profession. Thomas Newcomen, who improved Thomas Savery's steam engine, was a metal worker and an itinerant preacher.

No one can deny that the Industrial Revolution has been the greatest scaling phenomenon the world has ever seen. At the core of it was the need to develop a production system that would enable the production of more things with fewer people, which would allow us to move faster, with less effort. That concept is key to innovation, and innovation is key to scaling and productivity.

https://youtu.be/xuCn8ux2gbs?feature=shared&t=890

Bottom line

The bottom line of all of this is surprisingly simple and repetitive. It all comes back to Agile. Move forward, look back, improve, repeat. If you keep on refining and improving, eliminating what makes you slow and unproductive, then future iterations will be more efficient and you will be able to accomplish more with less. If you see something that slows you down or blocks your team, even if that thing is the very product you are selling, stop and improve it. Spend some time setting the stage for what is to come. Build your steam engine so you can place it in your factory. It will pay off. It has always paid off.